Table of Content

Overview

1. Request Ambiguity

2. Pathway Ambiguity

3. Data Retrieval Ambiguity

4. Data Extraction Ambiguity

5. Semantic Ambiguity

6. Composition Ambiguity

Silent failure is the default

Cumulative confidence decay

Feedback loops: learning from downstream failures

Design implications

About the Author

Taxonomy of Reliability Failures in GenAI Agentic Systems

Mapping the six silent failure points that undermine end-to-end reliability in enterprise AI agents

Overview

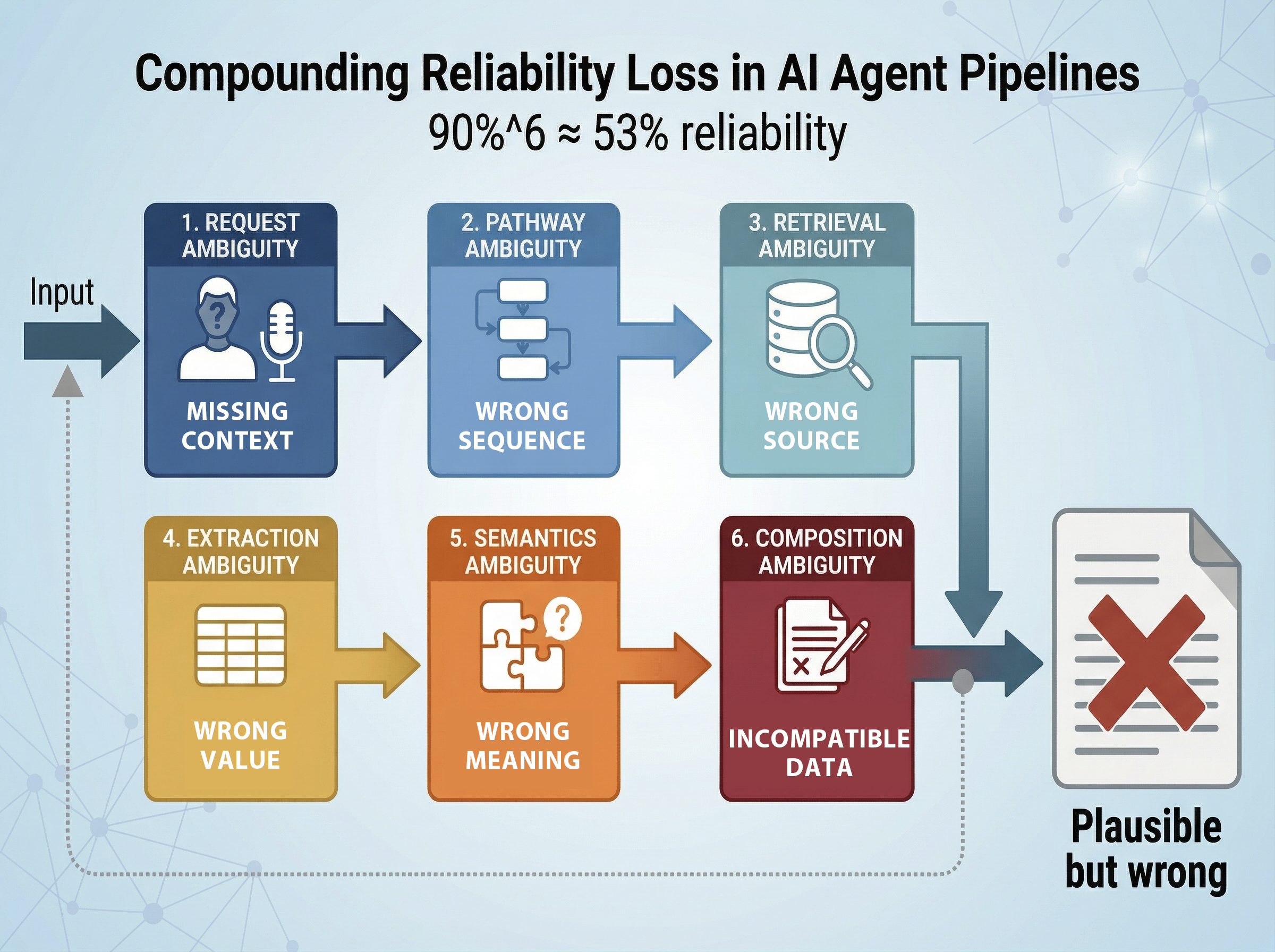

When professionals across functional units use GenAI agents equipped with tools to query data sources and knowledge bases, the reliability of output is subject to cumulative degradation across multiple stages. Each stage in the agent's pipeline introduces a probability of error, and because these stages are sequential, failures cascade — meaning overall system reliability is roughly the product of per-stage accuracy. An agent that is 90% accurate at each of six stages delivers a correct end-to-end result only ~53% of the time.

This taxonomy traces the lifecycle of an agentic request from formulation through final output, identifying six distinct failure modes. Critically, most of these failures are silent — the agent produces output that looks plausible but is subtly wrong, making traceability and auditability essential design considerations rather than afterthoughts.

1. Request Ambiguity

Stage: Request formulation by the user

The professional making the request often omits details that are "common sense" to a human colleague but not inferrable by an AI agent. This includes implicit assumptions about time periods, business units, metric definitions, data scope, or output format. Because the agent lacks this shared organizational context, it may construct queries to data sources or knowledge bases with missing or incorrect parameters, leading to retrieval of wrong data, files, or document chunks from the very first step.

Why it matters most: This is the highest-leverage failure point. An ambiguous request propagates errors through every downstream stage, and no amount of downstream precision can compensate for a fundamentally misunderstood intent.

Examples:

- "Pull the latest revenue numbers" — which entity, which fiscal period, which revenue definition (gross, net, recognized)?

- "Find the contract terms for Acme" — which contract, which version, which jurisdiction?

Mitigation approaches:

- Structured intake forms or templates that force specification of key parameters

- Clarification loops where the agent asks disambiguating questions before executing

- Organization-specific prompt scaffolds that inject default context (e.g., "unless specified, assume current fiscal year and consolidated figures")

2. Pathway Ambiguity

Stage: Agent planning and tool orchestration

Even when the request is well-understood, there may be ambiguity in the sequence of actions the agent should take. The agent must decide which tools to invoke, in what order, and how to handle conditional logic that depends on intermediate results. It may select an incorrect sequence, skip a necessary validation step, combine tool outputs inappropriately, or fail to branch correctly when an intermediate result changes the required approach.

Why it's hard: This is fundamentally a planning problem. Current LLM-based agents handle simple linear workflows adequately but struggle with conditional branching, especially when the branching conditions depend on data only available mid-execution. The risk is not just inefficiency — the agent follows a plausible-looking but incorrect path, and the user has no visibility into that routing decision.

Examples:

- The agent queries a database before checking whether the user has access permissions, returning data that should have been filtered

- A multi-step financial calculation requires currency conversion before aggregation, but the agent aggregates first

- The agent retrieves a document but skips a validation step to confirm it is the most current version

Mitigation approaches:

- Pre-defined workflow templates for common request patterns

- Explicit reasoning traces that log each planning decision for human review

- Guardrails that enforce mandatory steps (e.g., always check document version before extraction)

3. Data Retrieval Ambiguity

Stage: Fetching data from sources (structured and unstructured)

This failure mode is most pronounced with unstructured text and less common with well-structured databases. It manifests in two related but distinct ways.

Missing or incomplete metadata at the file level. Enterprise file systems are filled with invoices, reports, contracts, and other documents that lack consistent metadata — no standardized naming conventions, no reliable date tags, no entity or department labels, or metadata that has drifted out of sync with actual content. When an agent uses grep-like search or file-system traversal to locate the right document, missing metadata means it is essentially pattern-matching on filenames, folder paths, or sparse text snippets. This frequently leads to retrieval of the wrong file entirely — an invoice from the wrong vendor, a report from the wrong period, or a draft mistaken for a final version — with no signal to the agent that it has the wrong document in hand.

Loss of context at the chunk level. Even when the correct document is located, RAG systems that chunk documents for embedding may strip away essential context. Section headers, document titles, table captions, and surrounding paragraphs that clarify scope and meaning are often lost at chunk boundaries. The agent then retrieves a passage that appears relevant based on semantic similarity but is missing the qualifiers that would reveal it applies to a different business unit, time period, or product line.

Examples:

- An agent searching for "Acme Q3 invoice" retrieves a file named acme_invoice_final.pdf that is actually from Q2, because the filename contains no quarter indicator and the folder structure is flat

- Multiple versions of a report exist across shared drives with near-identical names; the agent selects an outdated draft because the metadata does not distinguish draft from final

- A RAG system retrieves a chunk discussing "Q3 margins" but the chunk boundary cuts off the paragraph specifying which business unit, leading the agent to assume it applies company-wide

- A keyword-based retrieval pulls a document that mentions a term in a different context (e.g., "Apple" the company vs. "apple" the commodity)

Mitigation approaches:

- Metadata enrichment pipelines that retroactively tag files with structured attributes (entity, date, document type, version, status) before they enter the agent's search scope

- Rich metadata tagging and consistent document taxonomy enforced at the point of creation

- Context-aware chunking strategies that preserve section headers, document titles, and surrounding context within each chunk

- Hybrid retrieval combining semantic search with metadata filters (e.g., restrict retrieval to documents tagged with the correct entity and fiscal period before running similarity search)

- Retrieval confidence scoring with thresholds below which the agent flags uncertainty rather than proceeding

- File deduplication and version control to reduce the chance of retrieving stale or duplicate documents

4. Data Extraction Ambiguity

Stage: Parsing and extracting specific values from retrieved content

Even when the correct document or chunk has been retrieved, extracting precise data points — particularly from unstructured formats — is error-prone. Numbers embedded in PDF tables, figures, footnotes, or complex layouts are especially vulnerable. Document processing pipelines may misparse table structures, confuse row-column alignment, misread OCR'd text, or fail to handle merged cells, multi-page tables, and nested headers.

Why it's dangerous: Table extraction from PDFs remains genuinely brittle even with modern tooling. The agent returns a number with full confidence, but it may be from the wrong row, the wrong table, or a misread digit — and nothing in the output signals this to the user.

Examples:

- A PDF table with merged header cells causes the parser to misalign values with their column labels

- A footnoted figure is extracted as the main value

- OCR misreads "1,234" as "1.234" due to font rendering artifacts

- A multi-page table is only partially extracted because the parser treats each page as independent

Mitigation approaches:

- Multiple extraction methods with cross-validation (e.g., compare OCR output against layout-based parsing)

- Confidence scoring on extracted values

- Human-in-the-loop verification for high-stakes numerical extractions

- Preprocessing pipelines that convert complex PDFs to structured formats before agent interaction

5. Semantic Ambiguity

Stage: Interpreting the meaning of extracted values

Even when extraction succeeds mechanically, the agent may misinterpret the semantic meaning of the data. This is particularly common with numbers in unstructured text, where the same figure might represent different things depending on context — units (thousands vs. millions), time frames (annual vs. quarterly), accounting treatments (GAAP vs. non-GAAP), or measurement definitions that vary across documents or departments.

Examples:

- A revenue figure is reported in thousands in one document and in millions in another; the agent treats both at face value

- "Operating income" in one source includes depreciation while another excludes it, but the agent treats them as equivalent

- A percentage is interpreted as a year-over-year change when it actually represents a proportion of total

Mitigation approaches:

- Unit and context normalization steps built into the extraction pipeline

- Ontology or glossary layers that map domain-specific terms to precise definitions

- Explicit labeling requirements where the agent must state the units, time period, and definition of any number it reports

6. Composition Ambiguity

Stage: Synthesizing outputs from multiple sources into a coherent response

This failure mode emerges when each individual retrieval and extraction step succeeds in isolation, but the agent incorrectly combines outputs drawn from different sources. The resulting synthesis may mix data from incompatible time periods, different organizational scopes, inconsistent methodologies, or mismatched definitions — producing an answer that is internally incoherent even though each component is individually correct.

Why it's distinct: This is not a failure of any single step but a failure of integration. It is especially insidious because every source citation checks out on inspection; the error lies in the act of combining them.

Examples:

- The agent retrieves a correct revenue figure from one report and a correct cost figure from another, but they correspond to different fiscal quarters

- Market share data from one source uses a different geographic scope than the total addressable market figure from another

- Headcount from an HR system is combined with productivity metrics from a finance report, but the HR data includes contractors while the finance data does not

Mitigation approaches:

- Explicit provenance tracking that tags every value with its source, time period, scope, and methodology

- Compatibility checks before combining values from different sources

- Structured output formats that display the metadata of each component alongside the synthesized result

Cross-Cutting Principles

Silent failure is the default

Unlike traditional software that throws errors, these ambiguities produce output that looks reasonable and reads confidently. The mitigation strategy cannot rely solely on making the agent more capable — it must also make the agent's reasoning and sourcing transparent, so that a human can audit the chain from request to final output.

Cumulative confidence decay

If each of the six stages operates at some accuracy rate, overall reliability is approximately the product of those rates. This framing makes explicit that even modest per-stage improvements yield significant gains in end-to-end reliability — and conversely, that a single weak stage can undermine an otherwise strong pipeline.

| Per-Stage Accuracy | End-to-End Reliability (6 stages) |

| 99% | ~94% |

| 95% | ~74% |

| 90% | ~53% |

| 85% | ~38% |

Feedback loops: learning from downstream failures

Errors detected at stages 4 through 6 — extraction failures, semantic misinterpretations, and composition mismatches — represent some of the most valuable signal available for improving the system over time. Rather than treating each failed interaction as an isolated incident, organizations should aggregate this feedback and route it back into earlier stages of the pipeline.

Why stages 4–6 specifically? These are the stages where errors are most likely to be caught during human review, because they produce concrete, verifiable outputs — a number, a label, a synthesized conclusion — that a domain expert can evaluate against their own knowledge. Earlier stages (retrieval, routing) produce intermediate artifacts that are harder to audit in isolation. Late-stage corrections therefore serve as a natural quality signal for the entire upstream chain.

How feedback should flow:

- Extraction failures (stage 4) should improve document processing pipelines. When a human corrects a misextracted table value or flags a parsing error, that correction — along with the source document layout — should feed into retraining or rule-tuning for the extraction layer. Over time, the system builds a corpus of known-difficult document formats and learns to handle them or flag them for manual extraction.

- Semantic corrections (stage 5) should enrich ontologies and glossaries. When a user clarifies that "operating income" in a particular source excludes depreciation, or that a figure is in thousands rather than millions, that clarification should be captured as a metadata annotation on the source and as a rule in the organization's semantic layer. This directly reduces future semantic ambiguity for any agent querying that source.

- Composition errors (stage 6) should generate compatibility rules. When a reviewer identifies that the agent combined figures from incompatible scopes or time periods, that pattern should be codified as a constraint — for example, "revenue from Source A and costs from Source B require fiscal period alignment before combination." These rules accumulate into a growing set of guardrails that prevent recurrence.

Implementation considerations:

- Build lightweight correction interfaces that let reviewers flag not just that an output is wrong but which stage introduced the error and what the correct interpretation should be

- Store corrections as structured annotations linked to the source document, the query, and the agent's reasoning trace, creating a reusable knowledge base

- Periodically retrain or fine-tune retrieval embeddings, extraction models, and semantic mappings using accumulated corrections

- Track error rates by stage over time to identify which layers are improving and which remain persistently brittle — this turns the cumulative confidence decay model from a diagnostic framework into a measurable improvement dashboard

Design implications

- Invest disproportionately in stage 1. Request ambiguity is the highest-leverage intervention point because it conditions everything downstream.

- Build traceability into every stage. Each intermediate output — the interpreted request, the chosen pathway, the retrieved sources, the extracted values, the semantic interpretation, and the composition logic — should be inspectable by the end user.

- Distinguish structured from unstructured data paths. Stages 3–5 behave very differently depending on data type. Systems should route structured and unstructured queries through different pipelines with appropriate confidence thresholds for each.

- Treat confidence as a first-class output. The agent should report not just an answer but a confidence assessment informed by which stages involved ambiguity, flagging cases where human review is warranted.

- Close the feedback loop from late-stage corrections. Errors caught at stages 4–6 are high-signal and should systematically flow back to improve extraction pipelines, semantic layers, and composition guardrails, turning every human correction into a durable system improvement.

About the Author

Dr. Rohit Aggarwal is a professor, AI researcher and practitioner. His research focuses on two complementary themes: how AI can augment human decision-making by improving learning, skill development, and productivity, and how humans can augment AI by embedding tacit knowledge and contextual insight to make systems more transparent, explainable, and aligned with human preferences. He has done AI consulting for many startups, SMEs and public listed companies. He has helped many companies integrate AI-based workflow automations across functional units, and developed conversational AI interfaces that enable users to interact with systems through natural dialogue.

You may also like